The Rise of the AI Camera Phone: From Lens to “Creative Engine”

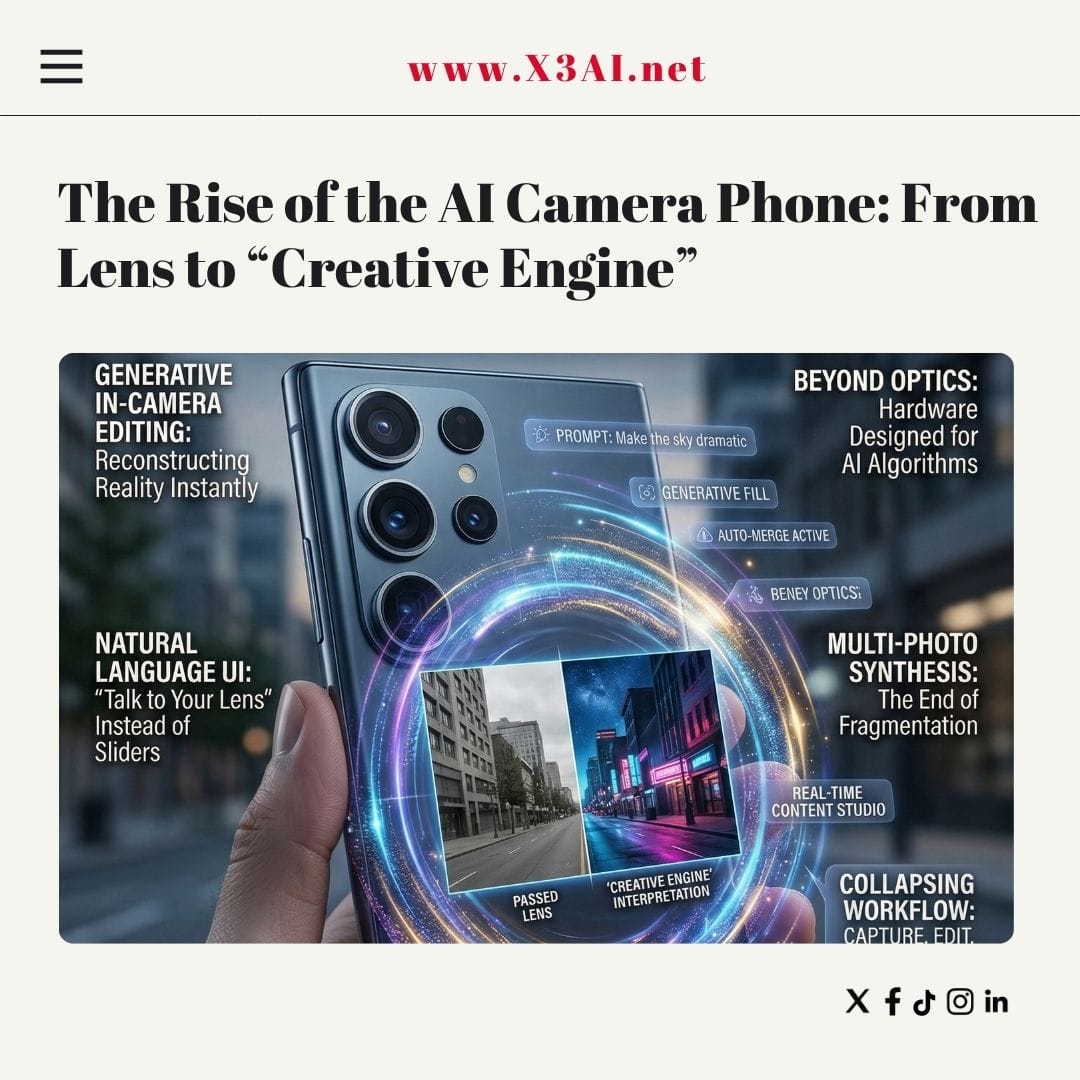

AI is rapidly transforming smartphone cameras from passive imaging tools into autonomous creative systems. The next wave of devices doesn’t just capture reality — it interprets, reconstructs, and even invents it. Evidence from recent flagship launches, manufacturer briefings, and technical previews shows that AI-centric camera systems with generative editing and unified workflows are moving from experimental features to default behavior.

Capture → Edit → Share Is Collapsing Into One Step

For years, mobile photography involved a fragmented pipeline: shoot in one app, edit in another, then export or share. That separation is disappearing.

Manufacturers are now building end-to-end AI camera platforms that unify capture, editing, and distribution inside the camera interface itself. Samsung’s latest Galaxy AI camera previews describe a system where users can:

- Edit photos using natural language

- Merge multiple images automatically

- Restore missing elements

- Transform lighting conditions (e.g., day → night)

- Share results instantly without app switching

These tools operate directly on the device and respond to conversational prompts, not manual sliders. (Samsung Mobile Press)

In practical terms, the camera app is becoming a real-time content studio rather than a shutter button.

Generative Editing Is Moving In-Camera

Generative AI, once limited to cloud software, is now embedded in phone imaging pipelines.

Recent previews of upcoming Galaxy devices show capabilities such as:

- Reconstructing occluded or missing objects

- Creating panoramas from multiple shots automatically

- Re-lighting scenes after capture

- Converting daytime photos into nighttime scenes

Tasks that previously required professional software can now be completed “in minutes directly from the smartphone.” (PetaPixel)

Flagship releases in 2026 reinforce this shift. The Galaxy S26 series introduces text-driven editing (“Photo Assist”), generative creative tools, and AI upscaling integrated into the core camera experience. (Tom's Guide)

The key distinction: editing is no longer a post-processing step — it is part of capture.

Hardware Is Being Built Around AI, Not Just Optics

Traditional camera improvements focused on lenses, sensors, and megapixels. Modern flagships still push those boundaries — for example, a 200-megapixel primary sensor with advanced low-light performance in the S26 Ultra — but hardware increasingly serves AI algorithms rather than the other way around. (Tom's Guide)

Dedicated neural processors and image signal processors now enable:

- Real-time scene understanding

- Multi-frame synthesis

- Subject tracking and stabilization

- On-device image generation

- Context-aware adjustments

Chipmakers emphasize that AI camera features can automatically recognize scenes, track motion, and optimize settings dynamically during capture. (Qualcomm)

The result is an imaging system that continuously computes the “best” version of a scene rather than recording a single exposure.
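To make "computing a scene from many exposures" concrete, here is a minimal sketch of the simplest form of multi-frame synthesis: averaging a burst of already-aligned frames to reduce noise. It is an illustration under stated assumptions, not any manufacturer's pipeline. The function names (`merge_burst`, `simulate_burst`), the synthetic flat gray scene, and the Gaussian noise model are all hypothetical, and real pipelines also align frames and reject motion before merging.

```python
import numpy as np

def simulate_burst(scene, n_frames, noise_sigma, seed=0):
    """Hypothetical helper: return noisy copies of a scene (Gaussian noise assumed)."""
    rng = np.random.default_rng(seed)
    return [scene + rng.normal(0.0, noise_sigma, scene.shape)
            for _ in range(n_frames)]

def merge_burst(frames):
    """Average a burst of aligned frames into a single lower-noise image."""
    stack = np.stack(frames).astype(np.float64)  # shape: (n_frames, H, W)
    return stack.mean(axis=0)

# Synthetic flat gray scene, used only for illustration.
scene = np.full((64, 64), 0.5)
burst = simulate_burst(scene, n_frames=16, noise_sigma=0.1)
merged = merge_burst(burst)

# Compare the residual noise of one frame with that of the merged result.
single_error = np.std(burst[0] - scene)
merged_error = np.std(merged - scene)
print(f"single frame noise: {single_error:.4f}")
print(f"merged frame noise: {merged_error:.4f}")
```

For independent noise, averaging N frames lowers the noise standard deviation by roughly a factor of √N, so a 16-frame burst should come out about four times cleaner than any single exposure in it.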

Natural Language Becomes the New User Interface

The breakthrough is as much about usability as capability: companies recognize that complex editing tools limit adoption.

New AI camera systems increasingly accept plain-language instructions:

- “Make the sky dramatic”

- “Remove people in the background”

- “Turn this into a night scene”

- “Merge these photos”

Samsung’s latest devices emphasize conversational editing and automated workflows designed to feel intuitive rather than technical. (Wall Street Journal)

This marks a shift from manual craftsmanship to intent-driven creation.

Multi-Photo Synthesis and Computational Reality

Modern smartphones capture far more data than a single image suggests. AI combines multiple frames, input from several sensors, and exposures taken at slightly different moments into one synthesized result.

Emerging techniques include:

- Merging shots from different cameras into one image

- Filling blurred or out-of-focus regions

- Generating all-in-focus photos from multiple inputs

- Building panoramas or composites automatically

Research shows that multi-camera smartphones can synthesize high-quality images by combining information from wide and ultra-wide sensors to restore detail and sharpness. (arXiv)

In effect, phones are producing images that were never captured in a single instant.
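One of the techniques listed above, generating an all-in-focus photo from multiple inputs, can be sketched as a classical focus stack. This is a minimal sketch and not the method from the cited research, which relies on learned models. It assumes the frames are already aligned and grayscale, and it uses a simple Laplacian response as the sharpness measure. The names `sharpness_map` and `focus_stack` and the synthetic checkerboard scene are hypothetical.

```python
import numpy as np

def sharpness_map(image):
    """Absolute 4-neighbour Laplacian response; large values indicate local detail."""
    padded = np.pad(image, 1, mode="edge")
    laplacian = (padded[:-2, 1:-1] + padded[2:, 1:-1]      # up + down
                 + padded[1:-1, :-2] + padded[1:-1, 2:]    # left + right
                 - 4.0 * image)
    return np.abs(laplacian)

def focus_stack(frames):
    """For each pixel, keep the value from the frame that is sharpest there."""
    stack = np.stack(frames).astype(np.float64)            # (n, H, W)
    sharpness = np.stack([sharpness_map(f) for f in stack])
    best = np.argmax(sharpness, axis=0)                    # (H, W) frame index
    return np.take_along_axis(stack, best[None, ...], axis=0)[0]

# Synthetic scene: a checkerboard stands in for fine detail.
scene = (np.indices((8, 8)).sum(axis=0) % 2).astype(np.float64)

# Two simulated shots: each is "in focus" on one half and flat gray on the other.
near_focus = scene.copy()
near_focus[:, 4:] = 0.5
far_focus = scene.copy()
far_focus[:, :4] = 0.5

merged = focus_stack([near_focus, far_focus])
print(np.array_equal(merged, scene))
```

In this toy case the merged result reproduces the full checkerboard even though neither input frame contains it, which is the sense in which the final image was never captured in a single instant.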

From “Camera Phone” to Personal Content Engine

Taken together, these developments suggest a broader transformation:

The smartphone camera is becoming an AI-native media system.

Key characteristics of this new paradigm:

- Unified workflow: Capture, edit, generate, and share in one interface

- Generative capability: Create new visual content, not just enhance existing shots

- Context awareness: Understand subjects, lighting, and intent

- Automation: Reduce technical skill requirements

- On-device intelligence: Perform complex tasks without cloud dependency

Manufacturers explicitly frame this evolution as enabling anyone to create cinematic photos or videos regardless of expertise. (Samsung Mobile Press)

What This Means for Photography Itself

As AI assumes more of the decision-making, the definition of photography is shifting from documentation toward interpretation.

Future images may be:

- Part capture

- Part reconstruction

- Part generation

Instead of asking “Did the camera see this?”, the more relevant question may become “Is this the image the user intended?”

The Bottom Line

AI-centric smartphone cameras are advancing at extraordinary speed. Generative editing, natural-language control, and unified capture workflows are not speculative concepts — they are already appearing in flagship devices and manufacturer roadmaps.

The camera is no longer just a sensor behind glass. It is becoming a real-time creative collaborator — one that can reshape reality as easily as it records it.
