From “Don’t Break the World” to “Build the Future”: Why Global AI Summits Are Pivoting to Impact

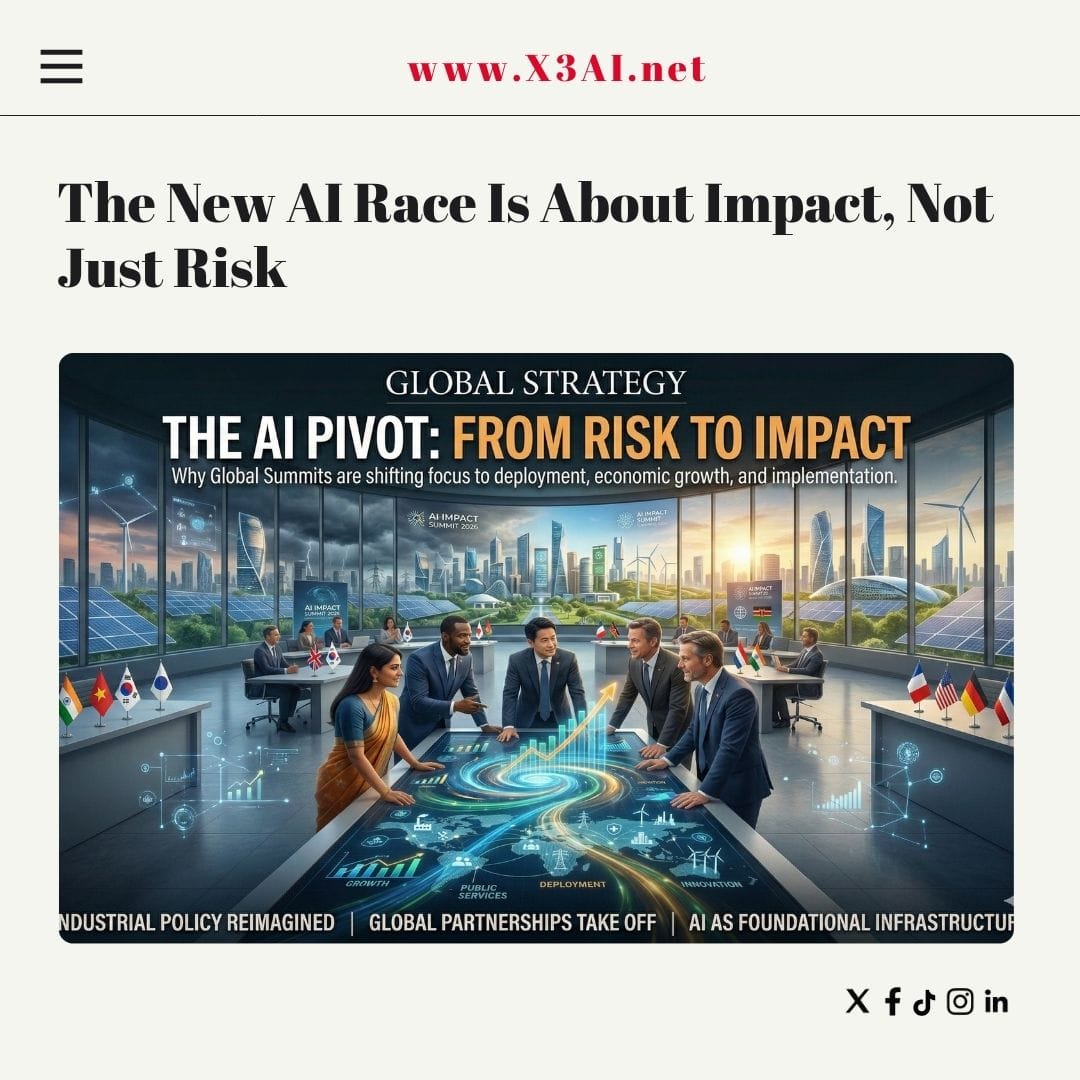

For years, the world’s biggest artificial intelligence gatherings sounded like emergency meetings: catastrophic risk, existential threats, runaway systems. Today, the tone has changed. Across continents, global AI summits are increasingly focused on deployment, economic growth, public services, and measurable outcomes — not just hypothetical dangers.

This shift is not speculation. It is visible in the evolution of summit agendas, official declarations, investment plans, and partnerships announced at recent events.

A Clear Timeline: Safety → Action → Impact

The change is easiest to see in the naming and framing of successive high-level summits.

- 2023 — UK AI Safety Summit (Bletchley Park): centered on catastrophic risks and governance

- 2024 — AI Seoul Summit: balanced safety with innovation and inclusivity

- 2025 — Paris AI Action Summit: emphasized implementation and economic opportunity

- 2026 — India AI Impact Summit: focused on real-world outcomes and scaling adoption

According to official summaries, the titles themselves reflect a deliberate shift. The 2026 summit explicitly prioritized “practical impact, implementation, and measurable outcomes.” (Wikipedia)

At the Paris meeting, leaders reframed the conversation toward innovation and economic potential while still acknowledging risks — a notable departure from earlier alarm-driven discourse. (Wikipedia)

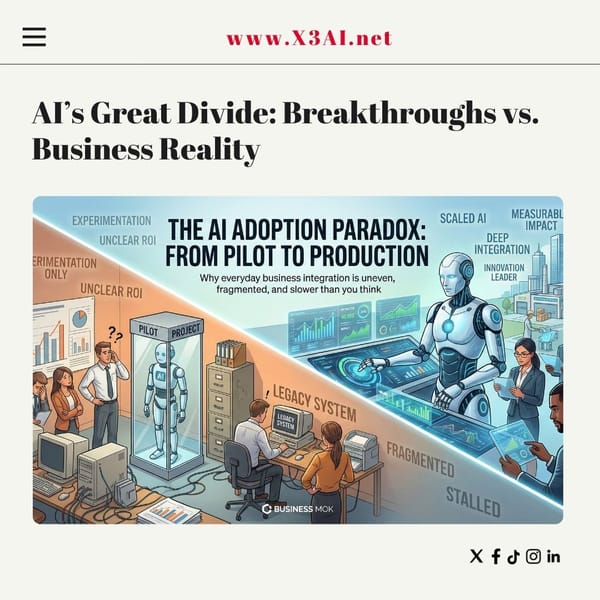

From Hypothetical Risk to Real Deployment

Early gatherings concentrated on scenarios such as loss of human control, misinformation, or autonomous weapons. Those concerns remain, but newer forums increasingly ask a different question:

How do we use AI now to deliver value at scale?

At the 2026 Impact Summit, working groups targeted areas like:

- Economic growth and productivity

- Social inclusion and empowerment

- Human capital development

- Resilience and efficiency

- Democratization of AI resources

These themes signal a move from precaution to execution. (Wikipedia)

Similarly, the UN-backed AI for Good Global Summit aims to deploy AI to solve concrete global challenges — from climate and healthcare to infrastructure and education — emphasizing partnerships, skills, and standards rather than abstract risk alone. (AI for Good)

Governments Now Treat AI as Industrial Policy

Another major change: AI summits increasingly function as economic strategy platforms.

At the Paris Action Summit, governments rallied around “national champions” in AI and major investment programs. (Wikipedia)

The European approach likewise emphasizes boosting industrial capacity alongside trust and safety — framing AI as a competitiveness issue as much as a regulatory one. (Digital Strategy)

Large funding announcements reinforce this direction. For example, Europe unveiled plans for massive public investment in AI infrastructure and “gigafactories,” signaling that AI leadership is now viewed as a source of strategic economic power. (Wikipedia)

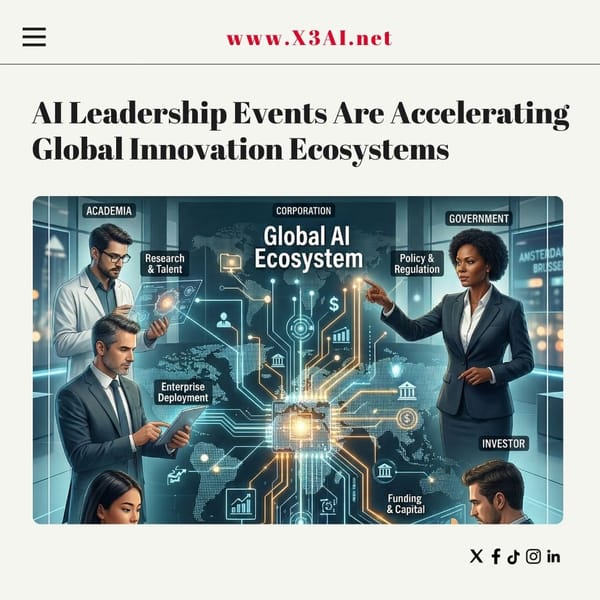

Partnerships Over Principles

Recent summits are producing concrete collaborations rather than only declarations.

At the 2026 Impact Summit, India, Kenya, and Italy launched a trilateral initiative to deploy sovereign AI solutions across Africa, aiming for scalable digital transformation and local empowerment. (The Economic Times)

Such initiatives reflect a new emphasis on implementation in emerging markets — where AI is seen as a tool for development, not just disruption.

Why the Shift Happened

Several forces are driving the move from safety rhetoric to impact agendas:

1) AI Is Already Everywhere

Generative AI and automation are no longer experimental. Governments and companies are deploying them across healthcare, finance, logistics, and public services.

2) Economic Competition Is Intensifying

Countries increasingly view AI leadership as analogous to past races for nuclear capability, space dominance, or semiconductor supremacy.

3) Public Expectations Have Changed

Citizens want visible benefits — better services, jobs, productivity — not only warnings about future risks.

4) Safety Without Deployment Has Limits

Policymakers now recognize that governance frameworks must coexist with innovation; otherwise, they risk stifling progress.

Safety Has Not Disappeared — It Has Been Repositioned

Importantly, safety discussions continue. Reports on risks, standards, and governance still accompany major summits. The International AI Safety Report, for instance, remains a central reference for policymakers. (Wikipedia)

But safety is increasingly framed as an enabler of adoption, not a reason for restraint.

At the Seoul summit, leaders explicitly discussed innovation, inclusivity, and job creation alongside risk mitigation — signaling the transitional phase between caution and deployment. (Reuters)

The New Global Narrative: AI as Infrastructure

Taken together, recent summits suggest a reframing of artificial intelligence:

From existential technology to foundational infrastructure

AI is now discussed in the same breath as energy systems, transport networks, or digital connectivity — essential to national development and geopolitical influence.

What Comes Next

Future summits will likely focus on:

- Scaling AI in public services

- Workforce transformation

- Cross-border data and compute alliances

- Supply chains for chips and energy

- Responsible but rapid deployment

In short, the question has evolved from “Should we build this?” to “Who will build it fastest — and how?”

Bottom Line

Global AI summits are no longer primarily crisis councils. They are becoming launchpads for policy, investment, and real-world implementation.

Safety sparked the conversation. Impact is now driving it.